French / Français

Dabkeh, a traditional dance in Palestine, inscribed on the intangible cultural heritage of...

Other inscribed elements include the Rotterdam Summer Carnival in the Netherlands; the alpine pasture season in Switzerland; Al-man’ouché, a...

Relative improvement in household morale in the first quarter of 2024

Photo credit: DiasporaEngager (www.DiasporaEngager.com).

Household morale improved somewhat in the first quarter of 2024, according to the Haut-Commissariat...

GLOBAL DIASPORA NEWS

Man Arrested in Paris After Iran Consulate Incident

French police on Friday arrested a man who had threatened to blow himself up at Iran’s consulate in Paris, but on...

Power outage sparks days of protest in Joburg

Main Road in Noordgesig was blocked by residents protesting over an extended electricity...

African Diaspora Leaders

Again, Honoring August Wilson: Holding Hallowed Cultural Ground

By Dr. Maulana Karenga —

In this month of remembering, reading and raising up the work and life of August Wilson (April...

Dr. William Strickland, scholar and political activist

By Herb Boyd —

“William Strickland embraced the challenge of the writing of Malcolm’s life and he did so in spite of...

Rev. Dr. Freddie Haynes Resigns from New Presidency of Rainbow/PUSH Coalition

Renowned pastor just officially assumed the reins from Rev. Jesse Jackson in February.

By Trice Edney News Wire —

Less than a year...

A Conversation with Dr. Ron Daniels aka the Professor

Powered by Black World Media Network

April 15, 2024: Special WBAI Fund Drive Edition of Vantage Point

A special WBAI fund drive edition of...

Concerning Israel, the US, Europe and Hitlerian Havoc — By Dr. Maulana Karenga

By Dr. Maulana Karenga —

As we witness and work and struggle to end the Israeli genocidal war against the Palestinian people,...

BUSINESS, FINANCE & ECONOMY

Immigration, Brain Drain & Refugees

20,000 People Displaced Daily One Year into Sudan War, IOM Urges...

Geneva/ Port Sudan – A staggering 20,000 people are forced to flee their homes in Sudan each day, half of them children,...

Philanthropy, Fundraising & Humanitarian

$2.8 billion appeal for three million people in Gaza, West Bank

The development came amid reports of ongoing Israeli bombardment of the Gaza Strip including Gaza City in the north, Rafah in southern Gaza and...

Gaza: rights experts condemn AI role in destruction by Israeli military

“Six months into the current military offensive, more housing and civilian infrastructure has now been destroyed in Gaza as a percentage, compared to any...

First Person: ‘I no longer amount to anything’ – Voices of...

He and others spoke to Eline Joseph, who works for the International Organization for Migration (IOM) in Port-au-Prince with a team which provides psychosocial...

Rape, murder and hunger: The legacy of Sudan’s year of war

Suffering is growing too and is likely to get worse, Justin Brady, head of the UN humanitarian relief office, OCHA, in Sudan, warned UN...

Wave of increased food insecurity hits West and Central Africa

This is a four million increase in the number of people currently dealing with food insecurity in that region. Mali is facing the worst situation...

World News in Brief: Looming famine threat for Sudan, 3.3 million...

After nearly a year of brutal civil war between rival militaries, food production has been hit and communities face acute shortages of other essential...

OTHER LANGUAGES (SPANISH, FRENCH, INDONESIAN, BRAZILIAN, ETC.)

Cycle of retaliation in the Middle East must end, says head of...

Following reports this Friday of Israeli attacks in Iran, near a nuclear plant, UN Secretary-General António Guterres made a new appeal...

Israel-Palestine: As the number of wounded in Gaza rises, a new...

The war continues in the Gaza Strip, with intense Israeli aerial bombardment and ground attacks causing numerous deaths and injuries among the...

PROPHETIC NEWS

Sustainability, Climate & Environment

Embrace innovation ‘to make sustainable transport a reality for all’

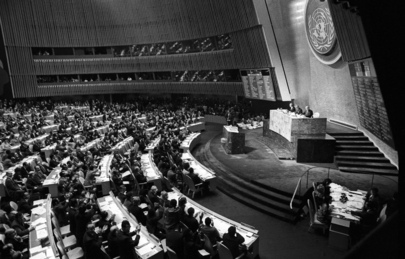

Opening its High-level Meeting on Sustainable Transport, Assembly President Dennis Francis urged countries to seize the opportunity to shape a green, inclusive and prosperous...

SCIENCE, TECHNOLOGY & RESEARCH

Conflict, Peace & Security

Lifestyle, Beauty, Culture & Opinion

Dengue in the Americas – Level 1 –...

Dengue is a disease caused by a virus spread...

Source of original article: Centers for Disease Control...